Quantum is the Next Data Center Transition

The data-center industry has spent the past five years rebuilding itself for AI. The power drawn by a single rack has climbed from 10 kW to more than 50 kW, with the most aggressive hyperscale designs quoting over 100 kW. Where liquid cooling was once an exotic feature, it is now part of the baseline. A 2024 analysis funded by the US Department of Energy put the electricity use of data centers at roughly 4.4% of the national total in 2023, with projections of up to 12% by 2028.

Every hyperscaler is drawing up site roadmaps and next-generation campus designs to meet that curve. But a second shift is coming behind the AI build-out, and most data-center roadmaps don’t yet reflect it. Quantum computing is about to start asking the same questions of data-center design that AI did five years ago.

Lessons from history

In the 19th century, factories produced their own power. Every mill had a steam plant or a waterwheel, and the entire building was organized around it. When the electric grid arrived, the gains came only once the factory itself had been redesigned around distributed electric motors. That took nearly 40 years. The productivity boom of the 1920s was the payoff from factories that were built natively for the new paradigm, not the ones that were retrofitted from the old one. Check out this great essay for more on that transition.

A more recent version of the same story ran the other way.

The transition of GPUs from specialist hardware to the most important component in the rack happened in under a decade. And it happened fast because GPUs slotted into the rack architecture that already existed. Operators didn’t have to redesign their facilities to start adopting GPUs. The subsequent wave of AI-driven redesign has been substantial, but it’s been an evolution of the existing rack paradigm, not a replacement of it.

The difference between these two stories isn’t the quality of the technology. It’s how much each one required the surrounding world to change. Electrification was transformative, but it demanded a generation of rebuilding before the benefits arrived, whereas the advantages of GPU acceleration arrived much faster. The more a new technology demands the infrastructure around it be rebuilt, the longer society waits for the payoff. The more it can slot into what already exists, the faster the payoff arrives.

What this means for quantum

That same logic applies to quantum. Architectures that integrate easily into data centers will do so on roughly the same timeline as the GPU transition: years, not decades. Architectures that require dedicated facilities will integrate on a timeline closer to that of electrification. Both paths could eventually produce useful quantum computing, but they won’t realize its impact at the same speed.

For data-center operators, that timeline difference is the point. And the decisions you’re making now about the capacity of a new facility will determine which of these timelines your center can actually capture.

The only way is hybrid

Most visions of utility-scale quantum computers involve room-sized or warehouse-sized systems similar to the ENIAC of the 1940s. But an alternative is emerging: quantum processors designed from the outset as rack-mounted accelerators that are sized, cooled, and powered to sit next to GPUs.

Why is this level of integration so important? Because the quantum layer is an accelerator that only delivers value when tightly coupled to classical compute. Classical systems handle control, orchestration, error correction, data preparation, and the overwhelming majority of the compute around any quantum workload. The value of the quantum slice is determined by how cleanly it integrates with the classical stack around it.

The industry is starting to move in this direction. NVIDIA NVQLink is designed to couple quantum processors directly to GPUs and CPUs, signaling that data-center-integrated quantum is becoming a serious design priority. Diraq is a launch partner for NVQLink, and our roadmap is built end-to-end for this future.

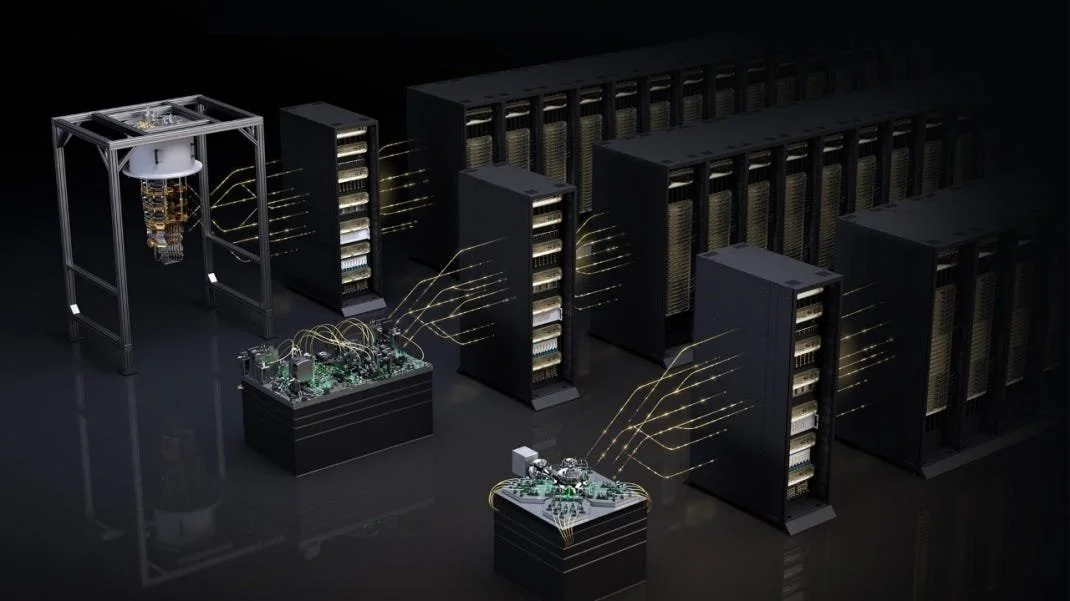

Image credit: NVIDIA

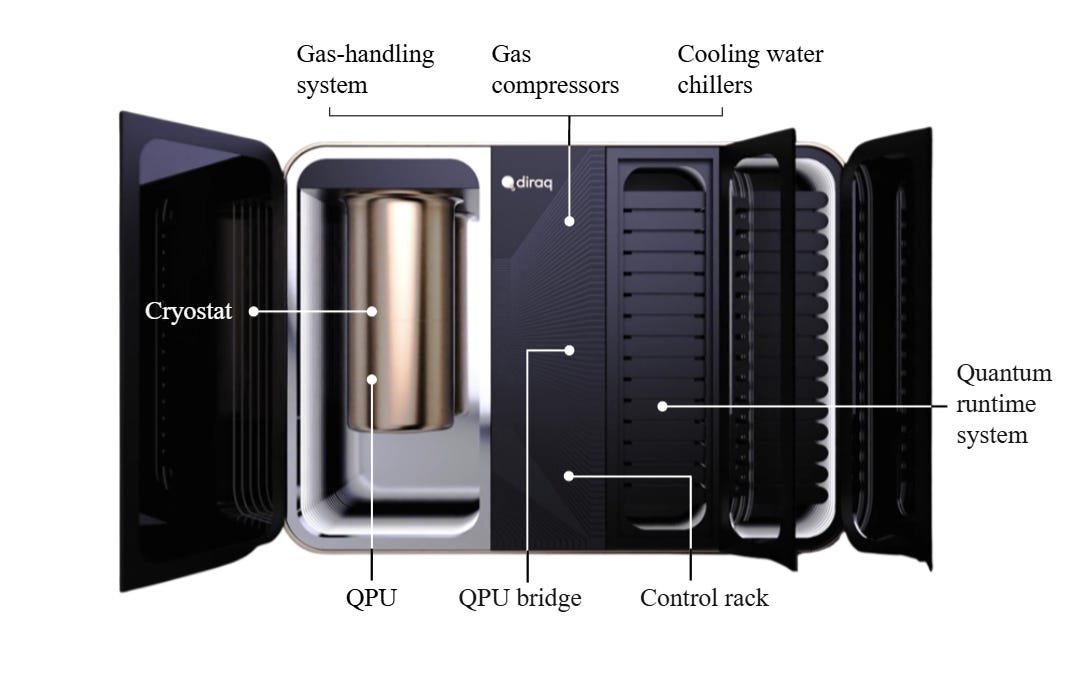

As well as integrating NVQLink, we’re working with Dell on the systems engineering that sits around the quantum processor: the racks, the classical control infrastructure, and the deployment packaging that turns a quantum processor into something a data center can install.

A decision for every data-center operator

Quantum-computing architectures differ by orders of magnitude across every planning dimension. For the same computational output, estimates of total power required range from around 100 kW for a rack-scale system to hundreds of megawatts for a warehouse-scale system. All approaches will require some form of cryogenic cooling, not just the familiar liquid cooling used for modern GPUs, and this will need to be delivered by dilution refrigerators, which are not part of standard data-center infrastructure.
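
To put that spread in terms operators already plan around, the short sketch below converts a quantum system's power draw into an equivalent number of AI racks. The 100 kW rack-scale figure and the "hundreds of megawatts" range come from the paragraph above; the 100 kW AI rack, the 300 MW example value, and the function name are illustrative assumptions, not vendor data.

```python
import math

# Assumed representative AI rack power (the article cites racks from 50 kW to over 100 kW).
AI_RACK_KW = 100.0


def equivalent_ai_racks(system_kw: float, rack_kw: float = AI_RACK_KW) -> int:
    """Return how many AI racks' worth of power a system draws, rounded up."""
    if system_kw <= 0 or rack_kw <= 0:
        raise ValueError("power values must be positive")
    return math.ceil(system_kw / rack_kw)


# Rack-scale quantum system: about 100 kW (figure from the article).
rack_scale_kw = 100.0
# Warehouse-scale system: "hundreds of megawatts"; 300 MW is an assumed example.
warehouse_scale_kw = 300_000.0

print(equivalent_ai_racks(rack_scale_kw), equivalent_ai_racks(warehouse_scale_kw))
```

Under these assumptions the script prints `1 3000`: the rack-scale system occupies the power envelope of a single AI rack, while the warehouse-scale system is comparable to a few thousand of them.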

A dedicated quantum facility is a serious commitment. It’s the kind of project an operator takes on when they’re convinced a specific architecture will win, and they’re willing to bet a site, a grid connection, and years of construction on that conviction. In this respect, a rack-scale quantum computer is a much easier commitment than one that fills a warehouse. Doing nothing is not a neutral stance either: it’s an implicit assumption that quantum computers will eventually look like standard racks, and that existing facilities will accommodate them.

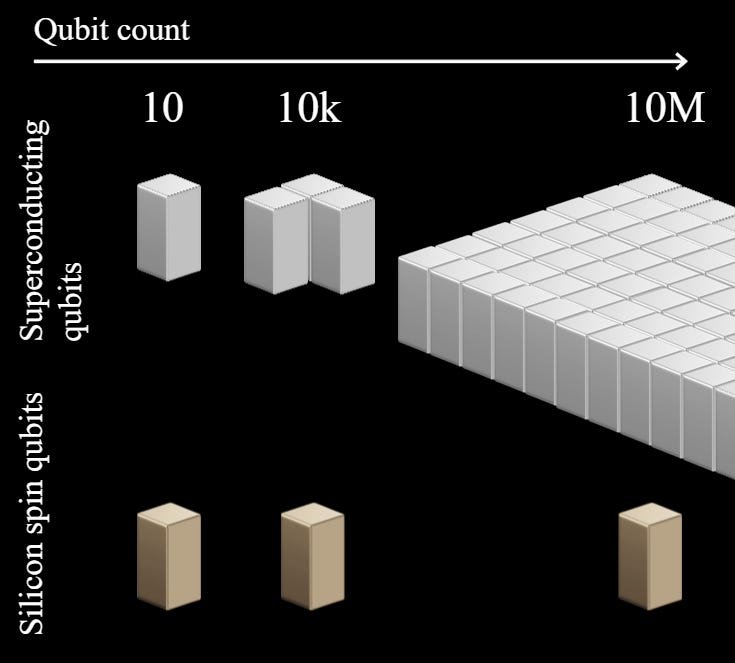

There’s a scaling argument worth discussing here. Quantum computers today are computationally small: they contain hundreds of qubits and run toy workloads. Most systems being piloted in data centers now are nothing like what they will be when they reach utility scale. Most of these architectures will scale by changing form dramatically, with much larger demands on space, power, and cooling.

But a handful (including Diraq’s) will scale by increasing the number of qubits on a single chip, the way classical computing did. This means that performance per watt, per area, and per dollar improves over time rather than degrades. In Diraq’s case, prototype systems today look fundamentally similar to the ones that will be built in five years, so lessons learned about integration carry forward to utility scale.

What happens if you wait

There is growing evidence that utility-scale quantum computers will be delivered by the end of the decade. The standards are being written now. The manufacturing partnerships are being signed now. The early deployments are being scoped now.

At a minimum, every capex pipeline currently being drafted should carry a named assumption about quantum:

- Which form factor is most likely to matter for the portfolio
- Which cryogenic requirements would need to be accommodated
- How much capex and opex to reserve
- Which sites are ideal hosts
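
One way to make such an assumption "named" is to record it as a structured entry alongside each pipeline item, so that unanswered questions are visible. The sketch below is a hypothetical illustration only: the class, field names, and example values are assumptions introduced here, not an established planning schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class QuantumAssumption:
    """Hypothetical record attached to one capex pipeline entry."""

    form_factor: str = ""             # e.g. "rack-scale" or "dedicated facility"
    cryogenic_requirements: str = ""  # e.g. "dilution refrigerator per rack"
    reserved_capex_usd: Optional[float] = None
    reserved_opex_usd_per_year: Optional[float] = None
    candidate_sites: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Return the names of planning questions that are still unanswered."""
        missing = []
        if not self.form_factor:
            missing.append("form_factor")
        if not self.cryogenic_requirements:
            missing.append("cryogenic_requirements")
        if self.reserved_capex_usd is None:
            missing.append("reserved_capex_usd")
        if self.reserved_opex_usd_per_year is None:
            missing.append("reserved_opex_usd_per_year")
        if not self.candidate_sites:
            missing.append("candidate_sites")
        return missing


# Example: a draft entry that has named a form factor and a site, nothing else.
draft = QuantumAssumption(form_factor="rack-scale", candidate_sites=["site-a"])
print(draft.missing_fields())
```

For the draft above, `missing_fields()` reports the cryogenic requirements and the capex and opex reserves as still open, which is the kind of gap a pipeline review would want surfaced.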