Scaling Quantum While Avoiding Energy Crises

The power draw of a single AI rack has climbed from roughly 10 kW to more than 100 kW in less than a decade. 1 MW racks will be a reality from 2028. Trillions of dollars are being spent to feed the insatiable demands of AI, and data centers are on track to account for over half the growth in US demand for electricity by 2030. Where, when, and how a new one gets built is increasingly a question of access to power.

Advanced computing has entered its infrastructure era, and quantum computing is heading toward the same transition.

Today’s quantum systems are still small enough that energy efficiency is mostly treated as an engineering optimization problem. But utility-scale quantum computing changes the equation entirely. Once systems scale to commercially useful workloads, the limiting factor is no longer just the processor. It is the total infrastructure required to support it.

And different quantum architectures scale very differently.

Some approaches increase capability by adding more infrastructure around the processor: larger cryogenic systems, more control hardware, more physical separation between components, and larger facility footprints. Other approaches aim to scale more like semiconductor computing historically has, by increasing qubit density on-chip.

In our previous Substack, Quantum is the Next Data Center Transition, we talked about how architectures that integrate cleanly into existing compute infrastructure will deploy faster than ones that require entirely new facilities. Energy is a key part of the reason.

Utility scale changes the energy equation

Small quantum systems hide infrastructure realities.

Most systems today operate with hundreds of physical qubits and run constrained workloads. At that scale, supporting infrastructure remains manageable. But fault-tolerant quantum computing changes the shape of the system entirely.

As systems scale, so do:

Cryogenic cooling requirements

Control electronics

Error correction overhead

Classical orchestration systems

Networking and interconnects

Pre- and post-processing infrastructure

The processor itself becomes only one part of the total energy footprint.

This is already visible in emerging estimates for fault-tolerant systems. Depending on the architecture, projected power requirements span an enormous range, from rack-scale deployments comparable to existing AI infrastructure through to warehouse-scale systems operating at HPC-level power consumption.

The infrastructure required to support a 100 kW system looks fundamentally different from the infrastructure required to support a 100 MW system. Quantum Energy Initiative estimates for some utility-scale quantum computers now extend as high as 200 MW, which is 200x the power that a single AI rack is predicted to demand by 2028. For context, 200 MW is equivalent to the electricity load of a city with around 150,000 people. Given that AI data centers are already putting pressure on the grid, such a system would be an untenable addition for most data centers.

This is not unique to quantum computing. Every major computing transition eventually runs into infrastructure constraints. The difference is that quantum computing still has time to design around them.

Quantum systems whose total overhead sits within the 1 MW envelope by 2028 will be deployable alongside AI, using the same power and cooling infrastructure. Architectures whose overhead runs an order of magnitude higher will need purpose-built facilities and dedicated power supply, competing for the same constrained substations and watersheds that the AI footprint is already crowding.
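To make the comparison concrete, here is a minimal sketch that measures a system's total power draw against the 1 MW rack envelope. The constant names, the function names, and the reading of "an order of magnitude higher" as a 10x cutoff are illustrative assumptions, not figures from any vendor or standard. The text does not say what happens between 1x and 10x, so that band is labeled as unaddressed.

```python
# Assumption: 1 MW per rack, the 2028 figure cited in the text.
AI_RACK_ENVELOPE_KW = 1_000.0
# Assumption: "an order of magnitude higher" is read as 10x the envelope.
PURPOSE_BUILT_FACTOR = 10.0


def ratio_to_rack(system_kw: float) -> float:
    """Return the system's total power draw as a multiple of one AI rack."""
    return system_kw / AI_RACK_ENVELOPE_KW


def deployment_class(system_kw: float) -> str:
    """Classify a system by how its power draw compares with the rack envelope."""
    ratio = ratio_to_rack(system_kw)
    if ratio <= 1.0:
        return "fits the AI rack envelope"
    if ratio < PURPOSE_BUILT_FACTOR:
        return "exceeds the envelope (not addressed in the text)"
    return "needs a purpose-built facility"


if __name__ == "__main__":
    examples = [
        ("100 kW system", 100.0),
        ("1 MW system", 1_000.0),
        ("200 MW system", 200_000.0),
    ]
    for label, kw in examples:
        print(f"{label}: {ratio_to_rack(kw):g}x one rack, {deployment_class(kw)}")
```

Under these assumptions, a 200 MW system comes out at 200 times the envelope, which matches the 200x figure above.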

Cooling becomes part of the compute architecture

Modern AI facilities already devote a significant percentage of total energy consumption to cooling overhead alone. Depending on facility design and workload, cooling can account for roughly 40% of total data center electricity use. Questions around water scarcity are also relevant: 2 out of every 3 new data centers are built in areas that experience high water stress.

Quantum systems introduce another layer of complexity.

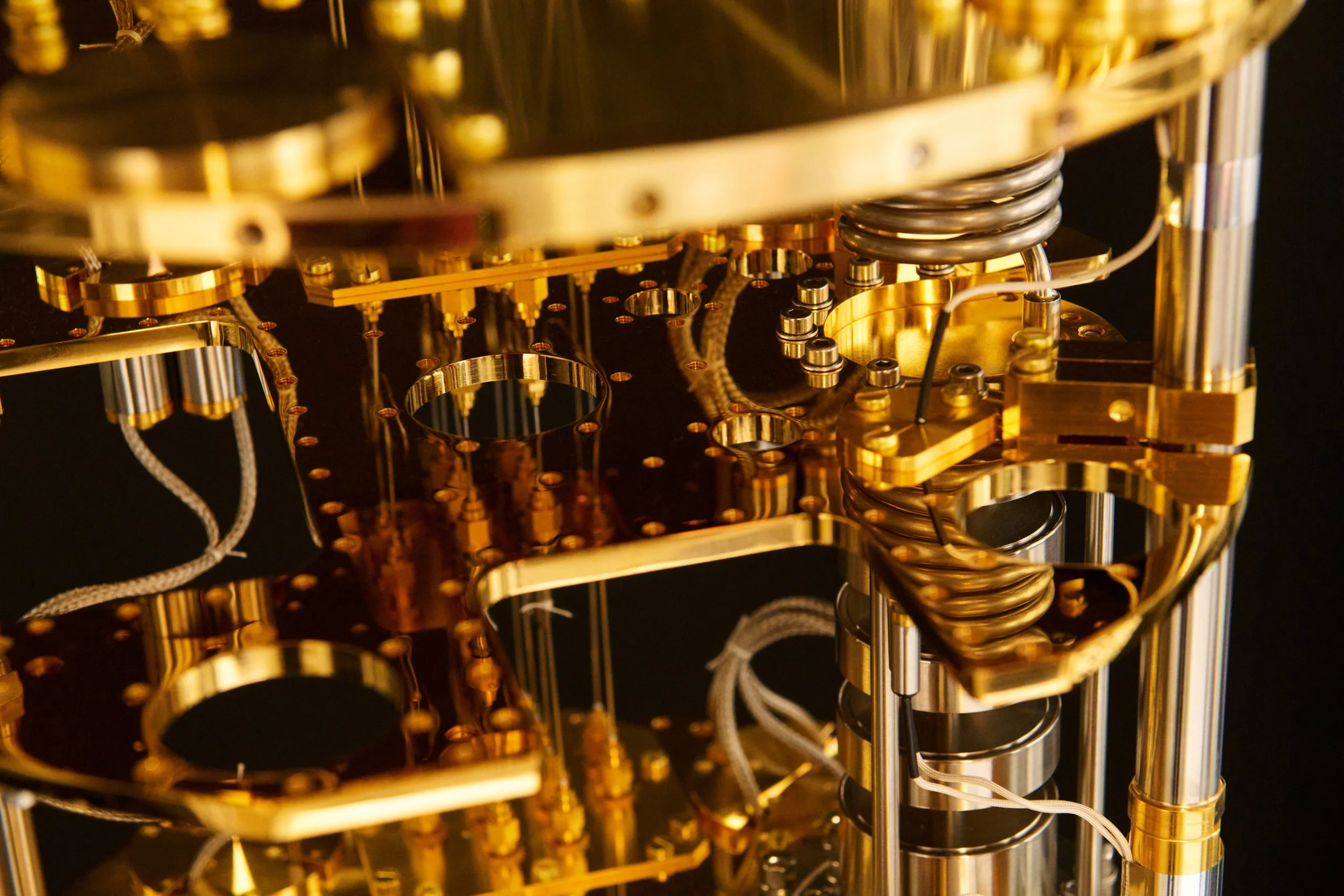

Some quantum systems must maintain temperatures that are a fraction of a degree above absolute zero, colder even than deep space. Diraq’s qubits also require cryogenic cooling, but they work at temperatures that are 100 times warmer than these ultracold (millikelvin) temperatures.

At utility scale, cooling architecture becomes part of the compute architecture. A commercially useful quantum computer is one where the value of the computation exceeds the cost of delivering it. That includes the cost of power, cooling, footprint, and operational infrastructure.

Different architectures create different energy futures

The energy profile of a quantum computer depends heavily on the modality itself.

Superconducting systems require operation at millikelvin temperatures, and the millions of qubits required for fault tolerance cannot be contained in a single cryogenic unit.

Trapped-ion and neutral-atom systems operate at room temperature, but they require high-powered lasers.

Photonic approaches perform operations at room temperature, but still rely on cryogenically cooled photon sources and detectors.

Spin qubits can operate at around 1 K, dramatically simplifying cooling requirements relative to millikelvin systems. And by leveraging processes honed by the semiconductor industry, millions of qubits can fit on a single chip, meaning fault-tolerant computing requires just one cryogenic unit, operating at 1 K.

The challenge is not simply the power draw of the quantum processor itself, but the total system required to support useful computation. This is where silicon changes the equation.

Quantum computing could help solve the energy problem itself

There is reason to be optimistic about quantum computing’s long-term role in solving some of our toughest energy challenges. Many of the most commercially important quantum applications involve materials, chemistry, and optimization problems tied directly to industrial energy use.

One promising area is materials discovery. Quantum systems could accelerate the search for metal-organic frameworks (MOFs) used in carbon capture and hydrogen storage. They may also improve catalyst design for industrial chemistry and fertilizer production, where even small efficiency gains can have enormous energy implications.

Take ammonia production. The Haber-Bosch process used to make ammonia industrially currently consumes roughly 2% of global energy production. Researchers are particularly interested in FeMoco, the iron-molybdenum cofactor at the active site of nitrogenase, the enzyme certain bacteria use to convert nitrogen into ammonia naturally. Simulating systems like FeMoco could eventually help develop fertilizer production methods that operate at lower temperatures and pressures, dramatically reducing energy consumption.

Another major opportunity is superconductivity.

Superconducting materials can transmit electricity with near-zero losses. The problem is temperature. Today’s materials only superconduct under extremely cold conditions, limiting practical deployment. Quantum systems may help identify materials capable of superconducting at far higher temperatures, potentially improving the efficiency of future power grids.

Optimization is another key category. Quantum computing could eventually help reduce waste across logistics, manufacturing, transport, and energy systems while improving the efficiency of large-scale simulations used in climate and industrial modeling.

AI models are being used today to try to tackle some of these problems, but in many cases, accurate training data cannot be produced in a reasonable amount of time with classical hardware. Instead, we use approximations, and the energy expenditure to run these models is still significant. Quantum computing will be better placed to generate the required training data, and so a fault-tolerant quantum system could complete in days what a GPU cluster might take weeks to estimate. That gets us closer to useful solutions in a more energy-efficient way.

McKinsey estimates that quantum-enabled climate technologies could contribute more than 7 gigatons of CO2 reduction annually by 2035. But optimism about these applications needs to be paired with realism about the infrastructure required to deliver them. A 200 MW quantum system running continuously for a year would consume roughly 1.75 TWh of electricity. Using current US grid averages, that corresponds to roughly 650,000 metric tons of CO2 emissions, comparable to the annual emissions of around 150,000 cars. Such systems could therefore do more harm than good in trying to solve climate-related problems.
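The arithmetic behind those figures can be reproduced with a short sketch. The grid intensity (0.37 kg of CO2 per kWh) and the per-car figure (4.6 metric tons of CO2 per year) are assumptions chosen to be consistent with the numbers quoted above, not values stated in this article. The function names are illustrative.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

# Assumption: approximate US grid average, in kg of CO2 per kWh.
GRID_KG_CO2_PER_KWH = 0.37
# Assumption: typical passenger car, in metric tons of CO2 per year.
CAR_TONNES_CO2_PER_YEAR = 4.6


def annual_energy_twh(power_mw: float) -> float:
    """Energy used in one year of continuous operation, in TWh."""
    return power_mw * HOURS_PER_YEAR / 1_000_000  # MWh -> TWh


def annual_emissions_tonnes(power_mw: float,
                            kg_co2_per_kwh: float = GRID_KG_CO2_PER_KWH) -> float:
    """CO2 emitted in one year of continuous operation, in metric tons."""
    energy_kwh = power_mw * 1_000 * HOURS_PER_YEAR  # MW -> kW, then kWh
    return energy_kwh * kg_co2_per_kwh / 1_000  # kg -> metric tons


def equivalent_cars(tonnes_co2: float,
                    tonnes_per_car: float = CAR_TONNES_CO2_PER_YEAR) -> float:
    """Number of cars whose annual emissions add up to the given total."""
    return tonnes_co2 / tonnes_per_car


if __name__ == "__main__":
    power_mw = 200.0
    emissions = annual_emissions_tonnes(power_mw)
    print(f"Energy: {annual_energy_twh(power_mw):.3f} TWh per year")
    print(f"Emissions: {emissions:,.0f} metric tons of CO2 per year")
    print(f"Equivalent cars: {equivalent_cars(emissions):,.0f}")
```

With these inputs the sketch gives about 1.75 TWh, just under 650,000 metric tons of CO2, and roughly 140,000 cars, in line with the rounded figures above. A cleaner or dirtier grid changes the emissions total in direct proportion.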

The quantum industry still talks about energy mostly as an optimization problem. That framing only works while systems remain small. At utility scale, energy becomes architecture. And the systems that manage that constraint most effectively may ultimately determine how quickly useful quantum computing reaches the real world.